In this and upcoming blog posts, we will create a pipeline in Azure DevOps for a LUIS language model. In the pipeline, we will subject the model to various types of tests, after which we will ‘build’ and deploy it to an Azure environment.

The LUIS model will eventually be used in a chatbot to recognize user goals (intents) in sentences. For simplicity, we will only cover intent recognition and will not concern ourselves with entity recognition.

The model will be developed in the LUIS portal by data scientists, data analysts or other people with domain knowledge. In this and upcoming blog posts I will refer to them as the authors of the model. When the model performs as desired, the author(s) export it and hand the exported file over to the developers. From that moment on we can start applying DevOps to the LUIS model: the file can be included in source control, automated tests can be run against it, and it can be deployed to one or more environments.

In this first blog post, we look briefly at the workflow of the authors and the resources they use. In the following posts, we will go into more detail about DevOps for LUIS and the Azure DevOps pipeline.

The series consists of the following blog posts:

- DevOps for LUIS - part 1: Author workflow

- DevOps for LUIS - part 2: Files, Azure resources, LUIS DevOps basics

- DevOps for LUIS - part 3: Azure DevOps pipeline, Pull request and build stage

- DevOps for LUIS - part 4: Azure DevOps pipeline, Deploy stages

So much for a brief introduction. Time to get started.

Author resources #

Naturally, an environment must first be created in which the authors can do their work. Usually the authors will have little or no programming knowledge. We therefore assume that they will use the LUIS portal to develop the model, because programming skills are not required to use it. A LUIS app created in the LUIS portal is always linked to an Azure LUIS Authoring resource. This will therefore have to be created first.

LUIS Authoring resource #

The LUIS Azure resources can be divided into Authoring and Prediction resources. LUIS apps can be created and managed in the authoring resources. Multiple versions can be created for a LUIS app. Such a version contains all the information to train a language model, after which it can eventually be published to a prediction resource. The prediction resource is used by applications to get predictions (about intents and entities) from the model.
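To make the role of the prediction resource concrete, here is a minimal sketch of how an application might ask it for the intent of a sentence. It assumes the v3.0 prediction REST endpoint and the `production` slot. The function names and all arguments (endpoint, app ID, key) are placeholders of my own, not values from this series.

```shell
# Build the prediction URL for a published app (production slot).
# $1 = prediction endpoint, $2 = LUIS app ID
prediction_url() {
  # ${1%/} drops a trailing slash so the path is not doubled
  printf '%s/luis/prediction/v3.0/apps/%s/slots/production/predict' "${1%/}" "$2"
}

# Ask the prediction resource for the intent of one utterance.
# $1 = prediction endpoint, $2 = app ID, $3 = prediction key, $4 = utterance
predict_intent() {
  curl --silent --get "$(prediction_url "$1" "$2")" \
    --header "Ocp-Apim-Subscription-Key: $3" \
    --data-urlencode "query=$4"
}
```

Calling `predict_intent "$endpoint" "$app_id" "$key" "book a flight"` should return a JSON document in which the predicted intents can be found, provided the app has been trained and published.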

For the authors, we create a one-off, isolated LUIS authoring resource containing a LUIS app in which they can develop and test a model. The authors manage this resource themselves, and it is separate from the pipeline that we have yet to develop.

Let’s start by creating the authoring resource in a new resource group:

az group create -l {region} -n {resource-group-name}

az cognitiveservices account create -n {resource-name} -g {resource-group-name} -l {region} --kind LUIS.Authoring --sku F0
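If you later need the endpoint or key of this authoring resource in a script, something along these lines may help. It is only a sketch: the two helper function names are my own, and the resource name and resource group are whatever you chose when creating the resource.

```shell
# Print the endpoint URL of the authoring resource.
# $1 = resource name, $2 = resource group name
authoring_endpoint() {
  az cognitiveservices account show --name "$1" --resource-group "$2" \
    --query properties.endpoint --output tsv
}

# Print the first access key of the same resource.
# $1 = resource name, $2 = resource group name
authoring_key() {
  az cognitiveservices account keys list --name "$1" --resource-group "$2" \
    --query key1 --output tsv
}
```

For example, `authoring_endpoint my-luis-authoring my-resource-group` (hypothetical names) prints the endpoint as plain text, which is convenient for storing in a variable.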

LUIS portal #

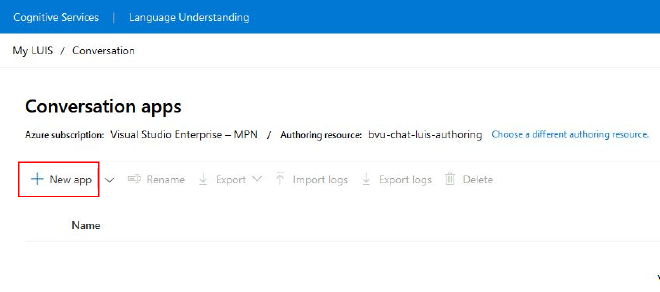

Go to the LUIS portal (for Europe: http://eu.luis.ai) and select the newly created resource as Authoring resource on the Conversation apps screen. Then click on New app to create a new LUIS conversation app and its first version (default v0.1).

Once the app has been created, the authors can get started with it via the Build page. Among other things, this is where the intents and entities are created. Based on these, the language model can be trained and tested. If the model performs as desired, it can be exported.

To do this, go to Manage > Versions, select the desired version of the app, click Export and select Export as LU.
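To give an idea of what the exported file contains, the sketch below writes a tiny, made-up LU file and runs a quick sanity check on it. It assumes the common `.lu` layout in which an intent is a line starting with `# ` and each example utterance is a line starting with `- `. The intent names and utterances are purely illustrative, and a real export also contains additional metadata.

```shell
# Hypothetical stand-in for an exported LU file
lu_file="$(mktemp)"
cat > "$lu_file" <<'EOF'
> Lines starting with '>' are comments
# BookFlight
- book a flight to paris
- i need a plane ticket

# None
- what is the weather
EOF

# Intents are lines that start with '# '
intent_count=$(grep -c '^# ' "$lu_file")
# Utterances are lines that start with '- '
utterance_count=$(grep -c '^- ' "$lu_file")

echo "intents: $intent_count, utterances: $utterance_count"
```

A check like this is no substitute for real tests, but it is a cheap way to confirm that the export is not empty before it is handed over.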

Handover #

The author now hands the exported LU file over to a developer who can add it to source control.

The Azure DevOps pipeline then takes care of testing, building and deploying the language model. We are going to take a detailed look at this process in the upcoming posts.

The newly deployed version can then (possibly automatically) be cloned to a new version. This new version is then used by the authors to further develop the language model.
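As an illustration of how such a clone could be automated, here is a minimal sketch that calls the LUIS authoring REST API with curl. The URL path assumes the v3.0-preview authoring API, and the function names and all arguments are placeholders of my own. The approach used later in this series may differ.

```shell
# Build the authoring URL for cloning one app version.
# $1 = authoring endpoint, $2 = app ID, $3 = source version
clone_url() {
  # ${1%/} drops a trailing slash so the path is not doubled
  printf '%s/luis/authoring/v3.0-preview/apps/%s/versions/%s/clone' "${1%/}" "$2" "$3"
}

# Clone source version $3 of app $2 into a new version $4.
# $1 = authoring endpoint, $5 = authoring key
clone_version() {
  curl --silent --request POST "$(clone_url "$1" "$2" "$3")" \
    --header "Ocp-Apim-Subscription-Key: $5" \
    --header "Content-Type: application/json" \
    --data "{\"version\": \"$4\"}"
}
```

For example, `clone_version "$endpoint" "$app_id" 0.1 0.2 "$key"` would request a new version 0.2 based on version 0.1, which the authors could then continue to work on in the portal.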